Ampere

Founded Year

2017

Stage

Acquired

Total Raised

$780M

About Ampere

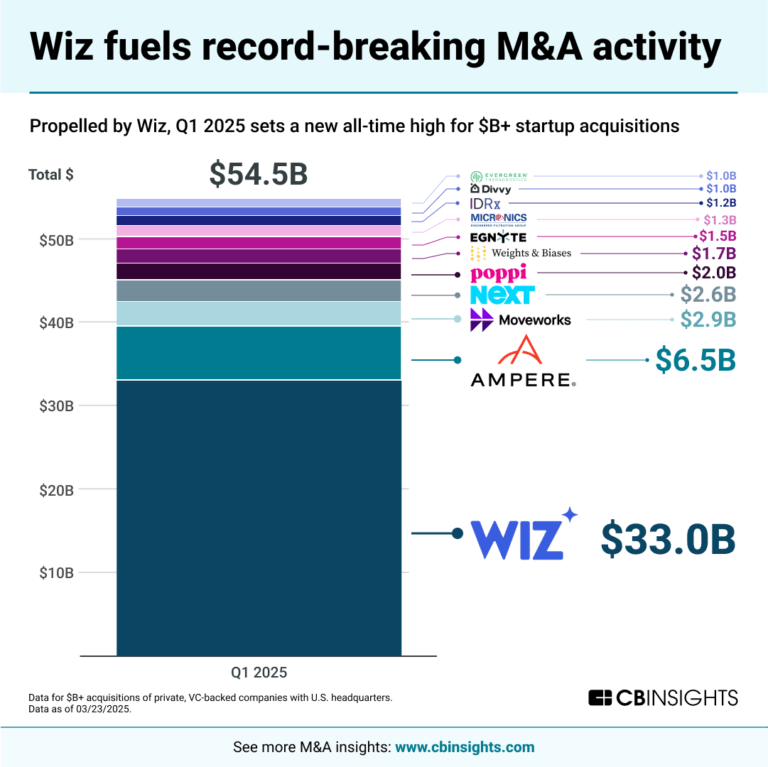

Ampere is a semiconductor company that designs processors for hyperscale cloud and edge computing, covering both data center environments and edge deployments. Its products are aimed at cloud service providers and other companies that need computing solutions. It was founded in 2017 and is based in Santa Clara, California. In March 2025, Ampere was acquired by SoftBank Group.


ESPs containing Ampere

The ESP matrix leverages data and analyst insight to identify and rank leading companies in a given technology landscape.

The high-performance computing (HPC) processors market develops specialized microprocessors for high-performance computing applications including supercomputers, scientific research, and AI training. These processors feature high core counts, advanced vector processing capabilities, and optimized memory architectures to handle complex parallel computations. Key applications include climate modelin…

Ampere is named a Leader among 13 other companies, including Cerebras, Fujitsu, and Advanced Micro Devices.


Research containing Ampere

Get data-driven expert analysis from the CB Insights Intelligence Unit.

CB Insights Intelligence Analysts have mentioned Ampere in 3 CB Insights research briefs, most recently on Mar 26, 2025.

Mar 26, 2025

Nvidia’s next big bet? Physical AI

Expert Collections containing Ampere

Expert Collections are analyst-curated lists that highlight the companies you need to know in the most important technology spaces.

Ampere is included in 1 Expert Collection: Semiconductors, Chips, and Advanced Electronics.

Semiconductors, Chips, and Advanced Electronics

7,489 items

Companies in the semiconductors & HPC space, including integrated device manufacturers (IDMs), fabless firms, semiconductor production equipment manufacturers, electronic design automation (EDA), advanced semiconductor material companies, and more

Ampere Patents

Ampere has filed 141 patents.

The 3 most popular patent topics include:

- computer memory

- parallel computing

- computer buses

| Application Date | Grant Date | Title | Related Topics | Status |
|---|---|---|---|---|
| 6/11/2020 | 4/8/2025 | | Cardiac arrhythmia, Transformers (electrical), Cardiac electrophysiology, Cardiac anatomy, Wireless energy transfer | Grant |

Latest Ampere News

Oct 16, 2025

Uber and Red Bull Racing adopting A4 as lead customers

SANTA CLARA, Calif., Oct. 16, 2025 /PRNewswire/ -- Oracle Cloud Infrastructure (OCI) today announced the upcoming general availability of A4 compute shapes powered by AmpereOne® M, the latest generation of Ampere-based compute. Launched in December, AmpereOne® M is gaining momentum, with systems available and additional designs under development with lead OEMs and systems builders. Continuing this expansion with A4, OCI becomes the first cloud provider to launch AmpereOne® M-based instances, delivering significant performance, efficiency, and cost benefits to customers worldwide.

A4 builds on the success of the widely adopted A1 and A2 compute shapes, which have grown to serve more than 1,000 customers in over 65 regions. A4 shapes are expected to be generally available in November in Ashburn (IAD), Phoenix (PHX), Frankfurt (FRA), and London (LHR), with additional regions to follow.

Shapes and Performance Details

A4 will be available in both bare metal and virtual machine configurations. Instances scale up to 96 cores running at 3.6GHz, delivering a 20% clock speed increase over A1 and A2. With 100G networking and an expanded 12 channels of DDR5 memory bandwidth, A4 shapes are built to support demanding AI inference workloads, including large language models (LLMs).

"Customers choose OCI for choice and flexibility (broad compute options and flexible shapes from small VMs to large bare metal) so they can align each workload to the right balance of performance, efficiency, and cost," said Kiran Edara, VP of Compute at Oracle Cloud Infrastructure. "Our upcoming ARM-based Ampere® A4 shape builds on what leaders like Uber and Oracle Red Bull Racing already achieve on OCI (stronger price-performance, and meaningful power savings) and takes it further so teams can scale cloud-native services across our global footprint, spend less, and meet their sustainability goals."

Powered by AmpereOne® M, the A4 compute shapes on OCI deliver Ampere's most advanced cloud architecture to date, built to provide consistent, efficient performance across a variety of workloads. The design innovations translate into up to 45% higher per-core performance on Cloud Native workloads than OCI A2, Ampere's previous generation product. A4 is also expected to deliver 30% better price-performance compared to AMD EPYC-based OCI E6 shapes.

"AmpereOne® M was designed from the ground up for cloud and AI workloads, delivering predictable performance, efficiency, and scalability," said Jeff Wittich, Chief Product Officer at Ampere. "The A4 launch at OCI gives customers access to the full potential of this latest processor, helping organizations accelerate their cloud and AI initiatives."

Optimized for AI Inference

The rapid scaling of generative AI demands lower cost, energy-efficient compute for AI inferencing at-scale. With AmpereOne® M's increased memory bandwidth for AI, A4 shapes are purpose-built to deliver on this challenge. Customers running small and mid-sized LLMs are already reporting improvements in Time-To-First-Token (TTFT) and Tokens-Per-Second (TPS), enabling cost-efficient CPU-based deployments for AI inference. When running Llama 3.1 8B with publicly available software stacks, OCI A4 is expected to offer a substantial 83% price-performance advantage compared to alternatives like Nvidia A10.

With A4, customers can benefit from leveraging highly granular, cost-effective resources that scale for better overall performance. This approach contrasts with large and expensive solutions that often require renting the entire unit, making them more expensive upfront and per unit of work, and less elastic.

To accelerate adoption of LLM workloads, Ampere has developed an AI Playground which is an easy entry point for customer adoption. Optimized software libraries and pre-built demos in Ampere's AI Playground GitHub are helping developers quickly initiate proofs-of-concept and deploy inference-ready applications.

Industry Leaders Among First to Adopt

Several high-profile customers are already moving workloads to A4, with early adopters secured in the US and Europe:

- Uber, which already runs a large portion of its capacity at OCI on Ampere, will deploy additional workloads on A4 in U.S. regions. Uber has already increased price-performance and lowered power consumption with existing Ampere-based infrastructure. The company expects up to 15% more performance from A4, along with further price-performance benefits and a lower carbon footprint.

- Through its partnership with Oracle, Red Bull Racing is already leveraging Ampere instances at OCI to predict optimal race strategy using their Monte Carlo simulations across billions of scenarios and outcomes. The company will adopt A4 instances in London for this use case and other AI and LLM workloads. The team expects a 12% performance boost for its race strategy simulations with A4.

Oracle Accelerates Its Own Adoption

Beyond external customer adoption, Oracle continues to deepen its internal use of Ampere-based compute. Fusion Applications are currently deployed on A1 and are expected to move onto A4, enabling better SaaS performance, while Block Storage is expected to join the growing list of OCI services now powered by Ampere processors.

In addition, Oracle Database software development teams are actively implementing Ampere's memory tagging capability, which detects memory safety violations to prevent potential exploits. They have reported strong results with almost no added overhead when deploying this feature. Delivering high performance with almost no memory capacity penalty, memory tagging is available on all AmpereOne® Family processors, the only data center processors to do so in production today. This work is another example of how Ampere and Oracle are working together to improve the performance, efficiency, and resilience of modern applications.

Setting the Pace for Cloud Performance

The A4 launch reflects the growing momentum of Ampere at Oracle. Over the last two years, the adoption of Ampere-based compute shapes at OCI has grown rapidly, as enterprises seek greater performance, efficiency, and sustainability. As the first cloud instance powered by AmpereOne® M, the OCI A4 shapes extend this momentum, bringing the latest generation of Ampere innovation to cloud customers.

Disclaimer

All data and information contained herein is for informational purposes only and Ampere reserves the right to change it without notice. This document may contain technical inaccuracies, omissions and typographical errors, and Ampere is under no obligation to update or correct this information. Ampere makes no representations or warranties of any kind, including express or implied guarantees of noninfringement, merchantability, or fitness for a particular purpose, and assumes no liability of any kind. Where data or information is sourced from or provided by third-party partners, Ampere does not independently verify such data or information and makes no representations or warranties regarding its accuracy, completeness, or reliability, and assumes no liability of any kind related to such third-party content. All information is provided "AS IS." This document is not an offer or a binding commitment by Ampere.

Price-performance calculations for A4 are based on an expectation of A2 instance pricing, as final A4 pricing remains unannounced. Actual A4 price-performance may vary. These calculations are illustrative and should not be relied upon as definitive. Ampere assumes no liability for discrepancies with final A4 pricing. System configurations, components, software versions, and testing environments that differ from those used in Ampere's tests may result in different measurements than those obtained by Ampere.

©2025 Ampere Computing LLC. All Rights Reserved. Ampere, Ampere Computing, AmpereOne and the Ampere logo are all registered trademarks or trademarks of Ampere Computing LLC or its affiliates. All other product names used in this publication are for identification purposes only and may be trademarks of their respective companies.

Ampere Frequently Asked Questions (FAQ)

When was Ampere founded?

Ampere was founded in 2017.

Where is Ampere's headquarters?

Ampere's headquarters is located at 4655 Great America Parkway, Santa Clara.

What is Ampere's latest funding round?

Ampere's latest funding round is listed as Acquired.

How much did Ampere raise?

Ampere raised a total of $780M.

Who are the investors of Ampere?

Investors of Ampere include SoftBank, Oracle, MACOM, Denver Acquisition, Carlyle and 3 more.

Who are Ampere's competitors?

Competitors of Ampere include EdgeCortix, Groq, Zyphra, d-Matrix, Tenstorrent and 7 more.


Compare Ampere to Competitors

d-Matrix specializes in AI inference technology within the computing sector. The company offers a platform named Corsair, which is designed to provide low-latency processing for generative AI applications. Corsair is a solution for data centers, aiming to make AI inference viable by addressing the balance between speed and efficiency. It was founded in 2019 and is based in Santa Clara, California.

Groq specializes in AI inference technology within the semiconductor and cloud computing sectors. The company provides computation services for AI models, ensuring compatibility and efficiency for various applications. Groq's products are designed for both cloud and on-premises AI solutions. It was founded in 2016 and is based in Mountain View, California.

NeoLogic specializes in microprocessor technology within the semiconductor industry. The company offers Quasi-CMOS technology, which reduces the transistor count in digital cores, leading to reductions in power dissipation and area, while improving performance-per-watt metrics. NeoLogic's technology is compatible with existing EDA tools and CMOS fabrication processes. It was founded in 2021 and is based in Netanya, Israel.

Rebellions is a company that provides AI accelerators for data centers. It offers AI hardware, including cards, servers, and rack-scale systems, that supports artificial intelligence applications. The hardware is accompanied by software that is compatible with AI frameworks such as PyTorch 2.x and vLLM. It was founded in 2020 and is based in Seoul, South Korea.

Tenstorrent is a computing company specializing in hardware focused on artificial intelligence (AI) within the technology sector. The company offers computing systems for the development and testing of AI models, including desktop workstations and rack-mounted servers powered by its Wormhole processors. Tenstorrent also provides an open-source software platform, TT-Metalium, for customers to customize and run AI models. It was founded in 2016 and is based in Toronto, Canada.

Quadric.io focuses on artificial intelligence computing, specifically in the domain of machine learning inference. The company offers a General Purpose Neural Processing Unit (GPNPU), a licensable processor that is optimized for on-device machine learning inference and can run complex C++ code. Its product is primarily used in the technology industry, particularly in sectors that require on-device artificial intelligence computing. It was founded in 2016 and is based in Burlingame, California.
